LLMs Continue to Raise Significant Privacy Issues

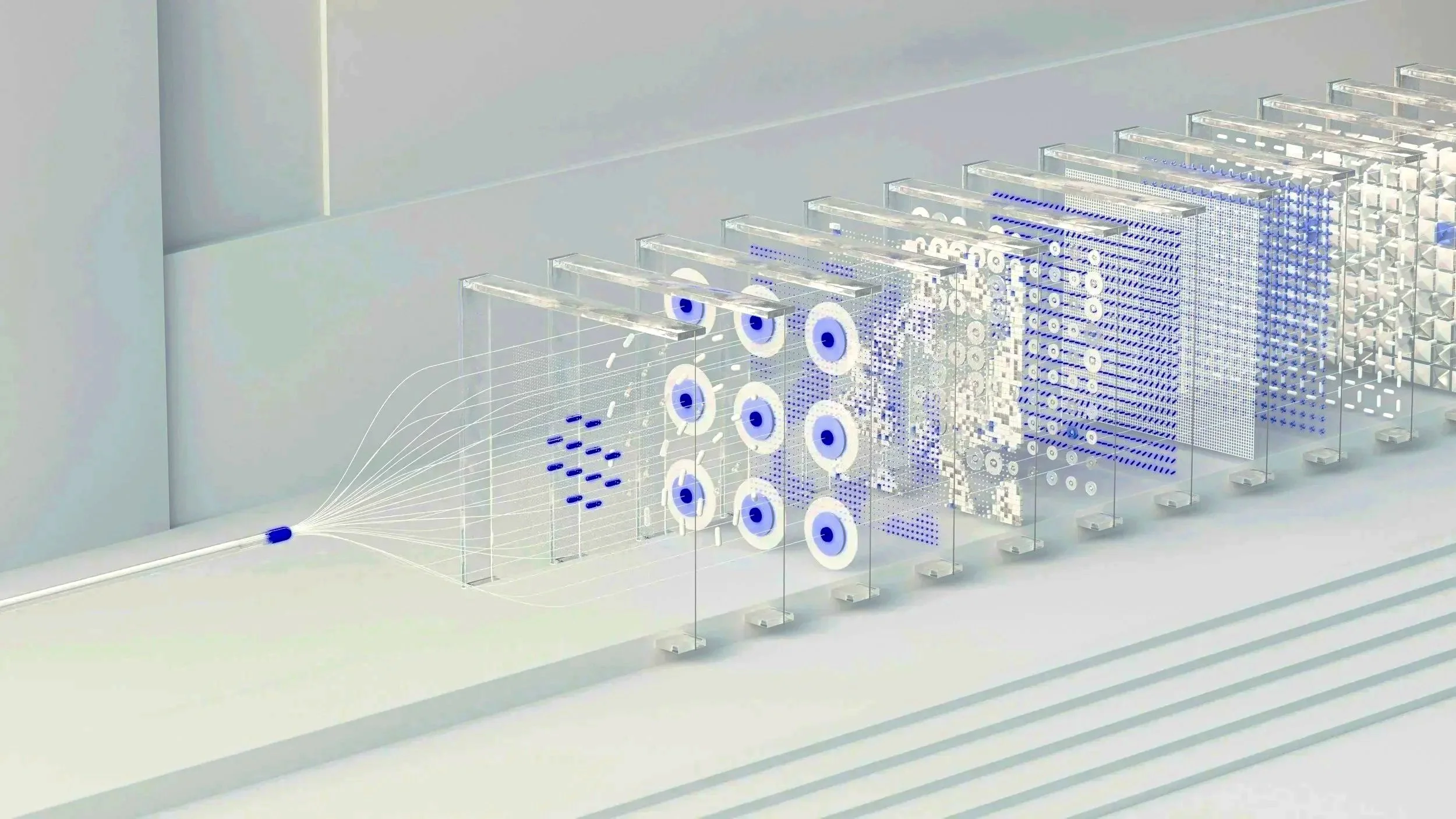

Despite refinements to LLMs in recent years, models remain highly susceptible to leaking private information through model inversion, training data extraction, and membership inference attacks (MIAs).

Abstract: This survey offers a comprehensive overview of privacy risks associated with LLMs and examines current solutions to mitigate these challenges. It first analyzes privacy leakage and attacks in LLMs, focusing on how these models unintentionally expose sensitive information. It then reviews existing privacy protection measures against such risks, such as inference detection, federated learning, backdoor mitigation, and confidential computing, and assesses their effectiveness in preventing privacy leakage.

A survey on privacy risks and protection in large language models

Chen, K., Zhou, X., Lin, Y. et al. A survey on privacy risks and protection in large language models. J. King Saud Univ. Comput. Inf. Sci. 37, 163 (2025). https://doi.org/10.1007/s44443-025-00177-1

________________________________

Disclaimer: This blog post is provided for informational purposes only and does not constitute legal advice. The linked article is the work of its respective author(s) and publication, with full attribution provided. BAYPOINT LAW is not affiliated with the author(s) or publication; it is shared solely as a matter of professional interest.